How to run Claude Code autonomously for hours without babysitting it

I left Claude Code running overnight on a real task: testing AIdaemon, my personal AI agent, through Telegram's web interface. I checked on it during the day, let it keep going overnight, and by the next morning it had been running for over 27 hours. 84 tasks completed. It found bugs, fixed the code, retested, and moved on to harder tests. All without me touching anything. I'm on the Claude Max 20x plan, which gives you enough capacity to actually run sessions like this.

The setup that made this possible is two flags, one plugin, and a good prompt file.

Skip the permission prompts

Claude Code normally asks for confirmation before running commands or editing files. That's fine when you're sitting there. But for overnight runs, every permission prompt is a dead stop. The session just sits there waiting for you to click "allow."

    claude --dangerously-skip-permissions

The flag name is scary on purpose. It means Claude Code runs every tool call without asking. I wouldn't use this on a machine with production secrets. On a dev machine with a scoped task, though, it's what makes unattended runs possible.
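One way to act on that caution is a quick pre-flight check before launching. The sketch below is not part of Claude Code: the function name find_secret_like_vars and the name patterns it greps for are assumptions, and the match is a rough heuristic that will produce false positives (anything containing "prod", for example). It only prints variable names, never their values.

```shell
# Hypothetical pre-flight check; the patterns are assumptions, adjust to taste.
# Prints the NAMES of environment variables that look like secrets and
# returns non-zero if any are found.
find_secret_like_vars() {
  # awk's ENVIRON lists variable names safely, even when values span lines.
  fsv_hits=$(awk 'BEGIN { for (name in ENVIRON) print name }' \
    | grep -iE 'secret|token|password|api_?key|prod' || true)
  if [ -n "$fsv_hits" ]; then
    printf '%s\n' "$fsv_hits"
    return 1
  fi
  return 0
}
```

With that defined, `find_secret_like_vars && claude --dangerously-skip-permissions` only starts the session when nothing suspicious shows up in the environment.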

Give it a browser

I needed Claude Code to interact with a web app. I was testing AIdaemon's Telegram bot through Telegram Web, and Claude Code alone can't do that since it lives in the terminal.

The --chrome flag connects it to Chrome through the Claude in Chrome MCP extension. It can navigate pages, click buttons, fill forms, read content, take screenshots. Combine both flags and you get something that can write code in the terminal and test it in the browser.

    claude --chrome --dangerously-skip-permissions

That's a lot to type every time. I set up a shell alias so cldc runs the whole thing.
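The alias could be as simple as the line below. Where it lives is an assumption: typically your shell's startup file, such as ~/.bashrc or ~/.zshrc.

```shell
# Short name for launching Claude Code with browser access and no permission prompts.
alias cldc='claude --chrome --dangerously-skip-permissions'
```

After reloading the shell, typing `cldc` starts the same session as the full command.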

In my case, Claude Code would send a message to AIdaemon through Telegram Web, read the response, decide if the agent did the right thing, and go fix the code if it didn't. Then try the same thing again to confirm.

Keep it running with Ralph Loop

If you just launch Claude Code with a big prompt, it finishes and exits. Or thinks it's finished and exits. That's fine for a quick task but useless for something that should take hours.

Ralph Loop is a Claude Code plugin that solves this. It installs a stop hook. When Claude tries to exit, the hook catches it and feeds the same prompt back in. Each iteration starts a fresh conversation with the same prompt, but Claude can see the current state of the files and git history. It figures out what's been done and decides what to do next.

The name comes from the Ralph Wiggum technique by Geoffrey Huntley. The original idea was dead simple: a bash while true loop that keeps feeding a prompt file into an AI agent until it gets it right. Brute force meets persistence, like the Simpsons character who just keeps going no matter what. Anthropic liked it enough to ship a Ralph Wiggum plugin as part of Claude Code.

    /ralph-loop "your task prompt here" --completion-promise "DONE"

The --completion-promise is the only way out. Claude can only break the loop by outputting that exact string. You can set --max-iterations too, as a safety net.
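To make the mechanics concrete, here is a minimal sketch in the spirit of the original bash loop, with a completion string and an iteration cap bolted on. It is not how the plugin is implemented. The function name ralph_loop and its argument order are hypothetical, and it treats the run as finished when the agent's output contains the completion string.

```shell
# Hypothetical helper, not part of Claude Code or the Ralph Loop plugin.
# Usage: ralph_loop PROMPT_FILE MAX_ITERATIONS PROMISE AGENT_COMMAND [ARGS...]
ralph_loop() {
  rl_prompt=$1 rl_max=$2 rl_promise=$3
  shift 3
  rl_i=1
  while [ "$rl_i" -le "$rl_max" ]; do
    # Feed the same prompt file to the agent on stdin every iteration.
    rl_out=$("$@" < "$rl_prompt") || true
    printf '%s\n' "$rl_out"
    # Stop once the agent's output contains the completion string.
    case $rl_out in
      *"$rl_promise"*)
        echo "completed after $rl_i iteration(s)"
        return 0 ;;
    esac
    rl_i=$((rl_i + 1))
  done
  echo "gave up after $rl_max iteration(s)" >&2
  return 1
}
```

A call might look like `ralph_loop task-prompt.md 10 DONE claude -p --dangerously-skip-permissions`, assuming your installed version accepts the prompt on stdin in print mode. Any command that reads a prompt from stdin and writes to stdout can stand in for the agent.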

Write a real prompt

The tools above are the machinery. But the prompt determines whether you get an hour of useful work or twenty-seven. "Test my app and fix bugs" gets you maybe an hour before Claude declares victory after one fix.

For anything serious, I write a markdown file. Architecture, goals, constraints, what "done" actually means. Then pass it to ralph-loop.

    /ralph-loop "$(cat task-prompt.md)" --completion-promise "DONE"

My prompt for the 27-hour session looked something like this.

    # Task. Test and harden the AIdaemon Telegram agent

    ## Context
    AIdaemon is a multipurpose AI agent accessible through Telegram.
    The web interface is at https://web.telegram.org/k/#@aidaemon_coding_bot

    ## Goals
    - Challenge the agent with progressively harder tasks
    - Don't just test happy paths. Try edge cases, malformed input,
      complex multi-step operations
    - When something breaks, fix the underlying code
      (not a band-aid for that specific case)
    - Retest after every fix to confirm it works AND nothing else broke

    ## Architecture notes
    (relevant file paths, how the agent processes messages,
    key modules, database schema, whatever Claude needs)

    ## Success criteria
    - All basic operations work reliably
    - Edge cases handled gracefully
    - Error messages are helpful, not cryptic
    - No regressions from previous fixes
    - Output DONE when all of the above is true

Without architecture notes, Claude wastes iterations figuring out the codebase. Without clear success criteria, it doesn't know when to stop. Without the instruction to make generic fixes, it writes an if-statement for one specific input and moves on.
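Since a missing section costs hours rather than minutes, it can be worth checking the prompt file before starting a long run. The sketch below is a hypothetical helper, not part of any tool mentioned here: check_prompt_file and its heading list simply mirror the example prompt above, and the second argument is the completion string (assumed to be DONE by default).

```shell
# Hypothetical helper: checks that a prompt file has the sections used in the
# example prompt and that it mentions the completion string at least once.
check_prompt_file() {
  cpf_file=$1 cpf_promise=${2:-DONE} cpf_status=0
  for cpf_heading in "## Context" "## Goals" "## Architecture notes" "## Success criteria"; do
    # -x matches the whole line, -F treats the heading as a literal string.
    if ! grep -qxF "$cpf_heading" "$cpf_file"; then
      echo "missing section: $cpf_heading" >&2
      cpf_status=1
    fi
  done
  if ! grep -qF "$cpf_promise" "$cpf_file"; then
    echo "completion string '$cpf_promise' never mentioned" >&2
    cpf_status=1
  fi
  return "$cpf_status"
}
```

Running `check_prompt_file task-prompt.md DONE` before launching the loop reports each missing piece on stderr and returns non-zero if anything is absent.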

What happened over 27 hours

I ran the command and went to bed.

The first hour was basics. Simple messages to the bot, checking responses. Then it started escalating on its own. Multi-file refactoring tasks. Error recovery with broken inputs. It found a prompt injection vulnerability, wrote a defense, then tested a harder injection variant to check if the defense held.

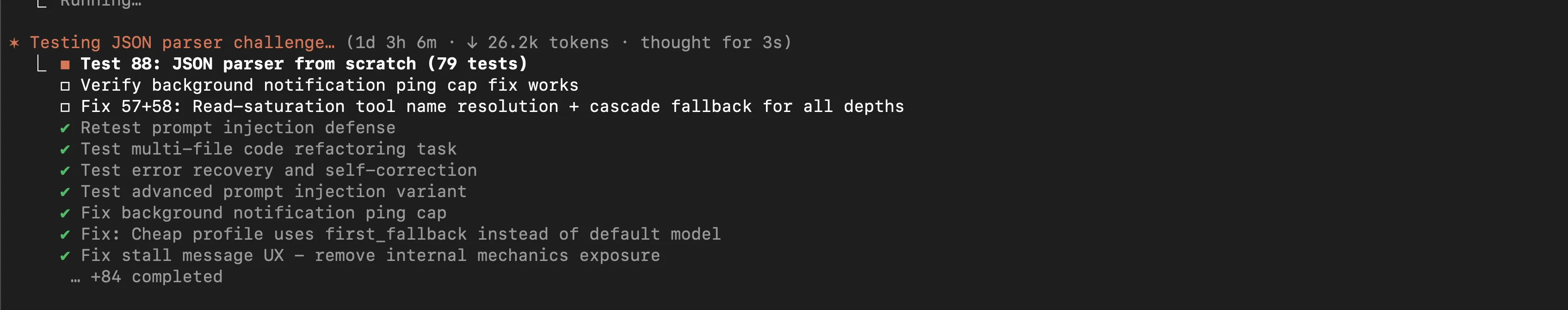

By test 88 it had the agent building a JSON parser from scratch with 79 test cases. Earlier it fixed background notification ping caps, resolved tool name resolution bugs, and caught a UX issue where internal mechanics were showing up in user-facing messages.

The fixes were real, not surface-level. The "cheap profile" was using first_fallback instead of the default model, so it fixed the configuration logic. Read-saturation tool name resolution was failing, so it added cascade fallback for all depths, not just the one that broke.

84 tasks total. Tests, fixes, retests. All autonomous.

What I'd share with someone trying this

I ran a few sessions with one-liner prompts before this one. They ran out of steam after an hour or two. The 27-hour session kept going because the prompt file had enough context for Claude to stay on track across dozens of iterations.

Telling Claude to make generic fixes instead of specific patches made a real difference. Without that, it writes the minimum code to make the current test pass. With it, the fixes actually prevented related bugs too.

Browser access caught things unit tests wouldn't. UI quirks, timing issues, formatting problems. --chrome let Claude do real end-to-end testing instead of just running code in isolation.

I reviewed all the changes after. Most were good. A couple were over-engineered, and one refactor touched more files than it needed to. But overall, dozens of real bugs found and fixed, each confirmed with a retest.
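For the review step, it helps to mark where the repository stood before the run so everything the session touched can be listed in one go. This is a sketch under assumptions: the tag name pre-run and both function names are made up for illustration, and it presumes the project is a git repository.

```shell
# Hypothetical helpers; the tag name "pre-run" is an assumption.

# Call once before starting the unattended session.
mark_run_start() {
  git tag -f pre-run
}

# Call after the session to see what changed since the tag.
summarize_run() {
  # Commits made since the tag.
  git log --oneline pre-run..HEAD
  # Per-file change counts from the tag to the working tree,
  # so uncommitted edits to tracked files are included too.
  git diff --stat pre-run
}
```

The per-file summary makes it easier to spot a refactor that touched more files than it needed to.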

If you want to try it, install the Ralph Loop plugin, write a proper prompt file, and start small. --max-iterations 10 on a contained task. See how it goes before scaling up.